Often, staged data is stored in a raw format, like JSON or Parquet, that's either determined by the source or compressed and optimized specifically for staging. Amassing data in a staging area allows for future retrieval or replication of production data in the event of a failure. So long as the raw data and transformation knowledge are retained, it's possible to fully recover any lost information. Additionally, such data records are incredibly useful for auditing purposes and serve as the foundation for data lineage management.

External staging may be useful in cases where:

- Complex transformations must be performed that are ill-suited for SQL.
- The volume of raw data exceeds that which can reasonably be stored in a data warehouse.
- Real-time or event streaming data must be transformed; engineers often need to handle late-arriving data or other edge cases.
- Operations dependent on existing data must be performed, like MERGE or UPSERT.

Modern approaches to unified cloud data warehouses often utilize a separate, internal staging process. This staging involves creating raw tables, separate from the rest of the warehouse. The raw tables are transformed, cleaned, and normalized in an 'ELT staging area'. A final layer pulls from the staging layer for 'presentation' to BI tooling and business users. By exposing only cleaned and prepared data to stakeholders, data teams can curate a single source of truth, reduce complexity, and mitigate data sprawl. Staging tables enable indexing downstream data for quicker queries, investigating intermediate transformations (for troubleshooting, auditing, or otherwise), and potentially reusing staged data in multiple sources. The use of staging within a warehouse is the defining characteristic of an Extract, Load, Transform (ELT) process, separating it from ETL. Staging inside the warehouse also suits transforming tables that require additional information within the same dataset: analytic functions like ordering, grouping, numbering rows, and running totals.
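The in-warehouse flow described here (raw tables, a staging layer, then a presentation layer using analytic functions) can be sketched roughly as follows. This is only an illustration: an in-memory SQLite database stands in for the warehouse, and the table names, view name, and columns (`raw_orders`, `stg_orders`, `orders_by_customer`) are hypothetical, not taken from any particular system.

```python
import sqlite3

# Assumption: SQLite stands in for a cloud warehouse; all names are illustrative.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Raw layer: data landed as-is, kept separate from the rest of the warehouse.
    CREATE TABLE raw_orders (order_id INTEGER, customer TEXT, amount TEXT, ordered_at TEXT);
    INSERT INTO raw_orders VALUES
        (1, ' alice ', '10.50', '2024-01-01'),
        (2, 'BOB',     '20.00', '2024-01-02'),
        (3, 'alice',   '5.25',  '2024-01-03'),
        (3, 'alice',   '5.25',  '2024-01-03');

    -- Staging layer: cleaned, typed, and de-duplicated.
    CREATE TABLE stg_orders AS
    SELECT DISTINCT
        order_id,
        lower(trim(customer)) AS customer,
        CAST(amount AS REAL)  AS amount,
        ordered_at
    FROM raw_orders;

    -- Presentation layer: analytic functions (row numbering, running totals)
    -- computed from the staged data only.
    CREATE VIEW orders_by_customer AS
    SELECT
        customer,
        order_id,
        ROW_NUMBER() OVER (PARTITION BY customer ORDER BY ordered_at) AS order_seq,
        SUM(amount)  OVER (PARTITION BY customer ORDER BY ordered_at) AS running_total
    FROM stg_orders;
""")

rows = conn.execute(
    "SELECT customer, order_id, order_seq, running_total "
    "FROM orders_by_customer ORDER BY customer, order_seq"
).fetchall()
for row in rows:
    print(row)
```

Business users would query only `orders_by_customer`; the raw and staging tables stay available for troubleshooting and auditing.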
Of course, 'staging' is an inherently vague term: it can describe the traditional 'staging area' for data living outside a warehouse or the more modern staging process living inside a warehouse. The traditional data staging area lives outside a warehouse, often in a cloud storage provider, like Google Cloud Storage (GCS) or Amazon Simple Storage Service (AWS S3). In this staging process, a data engineer is responsible for loading data from external sources, collecting event streaming data, and/or performing simple transformations to clean data before it's loaded into a warehouse.
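As a rough sketch of external staging, the example below writes raw batches to a staging location, applies a simple cleaning step, and then loads the result into a warehouse table with an upsert. Everything here is an assumption made for illustration: a temporary local directory stands in for a GCS or S3 bucket, SQLite stands in for the warehouse, and the helper names (`stage_raw`, `clean`, `load`) and the `users` table are hypothetical.

```python
import json
import sqlite3
import tempfile
from pathlib import Path


def stage_raw(staging_dir, batch_name, records):
    """Write a batch to the staging area untouched, one JSON object per line."""
    path = staging_dir / f"{batch_name}.jsonl"
    path.write_text("\n".join(json.dumps(r) for r in records))
    return path


def clean(record):
    """Simple transformation applied before loading: fix types and whitespace."""
    return {"id": int(record["id"]), "email": record["email"].strip().lower()}


def load(conn, staged_file):
    """Clean a staged file and upsert its rows into the warehouse table."""
    rows = [clean(json.loads(line)) for line in staged_file.read_text().splitlines()]
    conn.executemany(
        "INSERT INTO users (id, email) VALUES (:id, :email) "
        "ON CONFLICT(id) DO UPDATE SET email = excluded.email",
        rows,
    )
    conn.commit()


with tempfile.TemporaryDirectory() as tmp:
    staging_dir = Path(tmp)  # stand-in for a cloud storage bucket
    conn = sqlite3.connect(":memory:")  # stand-in for the warehouse
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")

    stage_raw(staging_dir, "batch_001", [
        {"id": "1", "email": " A@Example.com "},
        {"id": "2", "email": "b@example.com"},
    ])
    stage_raw(staging_dir, "batch_002", [{"id": "1", "email": "a.new@example.com"}])

    # Load batches in name order; the raw files are left in place afterwards.
    for staged in sorted(staging_dir.glob("*.jsonl")):
        load(conn, staged)

    users = conn.execute("SELECT id, email FROM users ORDER BY id").fetchall()
    staged_names = sorted(p.name for p in staging_dir.glob("*.jsonl"))
    print(users)
```

Because the raw batches remain in the staging location after loading, the `users` table could be rebuilt by replaying them in order, which is the recovery property staging is meant to provide.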

Having a single source of staged data also reduces 'data sprawl', a term used to describe a landscape in which data is scattered (and potentially duplicated), eliminating a single source of truth. Data sprawl can lead to confused business users and analysts, degrading the impact of even well-maintained sources.

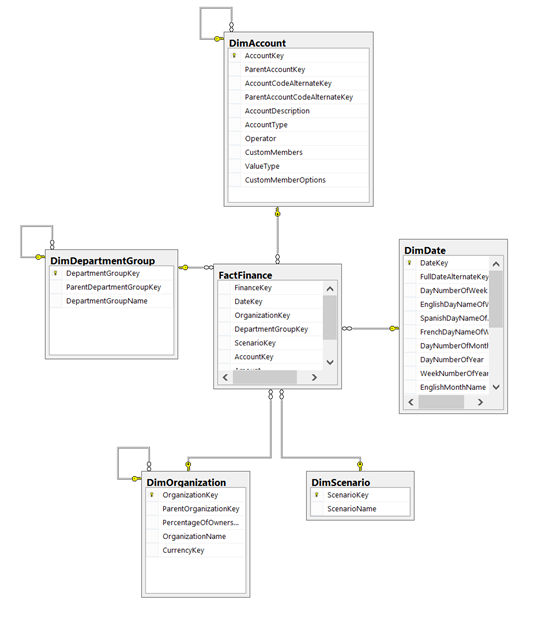

An example data warehouse with a staging area and data marts.

What is Data Staging?

The basic data staging layer is an intermediate step that sits between the data's source(s) and target(s). Often, these staging areas correspond directly with the process of retrieving data from raw source systems into a 'single source of truth', defining a protocol for cleansing and curating the data, and providing methods to access the data from target data services and applications. The staging approach offers a number of benefits, including testing source data, mitigating the risk of a pipeline failure, creating an audit trail, and performing complex or computationally intense transformations.
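One of the benefits listed here, testing source data before it reaches a target, might look something like the sketch below. It is a minimal illustration under assumed rules: the checks (unique, non-missing `id` and a non-negative numeric `amount`) and the function names `validate` and `promote` are hypothetical, and a plain Python list stands in for the target table.

```python
def validate(rows):
    """Return a list of human-readable problems found in a staged batch."""
    problems = []
    seen_ids = set()
    for i, row in enumerate(rows):
        row_id = row.get("id")
        if row_id is None:
            problems.append(f"row {i}: missing id")
        elif row_id in seen_ids:
            problems.append(f"row {i}: duplicate id {row_id}")
        else:
            seen_ids.add(row_id)
        amount = row.get("amount")
        if not isinstance(amount, (int, float)) or amount < 0:
            problems.append(f"row {i}: invalid amount {amount!r}")
    return problems


def promote(staged_rows, target):
    """Copy a staged batch into the target only if it passes validation."""
    problems = validate(staged_rows)
    if problems:
        return problems  # target is left untouched
    target.extend(staged_rows)
    return []


target = []
staged_bad = [{"id": 1, "amount": 10.0}, {"id": 1, "amount": -3}]
staged_good = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 5}]

bad_result = promote(staged_bad, target)
target_after_bad = list(target)
good_result = promote(staged_good, target)
print(bad_result, len(target))
```

A failed batch stays in staging for inspection while the target remains unchanged, which is how a staging layer can limit the impact of a bad load.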

Most data teams rely on a process known as ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) to systematically manage and store data in a warehouse for analytic use. Data staging is a pipeline step in which data is 'staged' or stored, often temporarily, allowing for programmatic processing and short-term data recovery. This article will discuss staging in the context of the modern cloud storage system, and the relationship between data environments and the software release lifecycle.

Depending on the transformation process, staging may take place inside or outside the data warehouse. The staging location depends on whether data is first loaded or transformed. Some teams will choose to have multiple staging areas in both locations.
